Lacking Intelligence About Our Intelligence

In October of last year, I went to my product and engineering leads with what felt like a straightforward question: Who on the team is using AI, and how are they using it?

I wasn't asking because something had gone wrong. I wanted my team to be using AI. We were pushing hard on adoption because I believed it was making us faster. I just didn't have a clear picture of how it was working in practice. Which agents had our employees installed? What were they using them for? Were we getting the most out of what we were paying for?

What I didn't expect was how hard it would be to answer these questions. These same individuals had built endpoint management tools that most enterprises still run today. They went looking in the MDM and EDR tools we had available, and nothing came back with a clean answer. The question I was asking fell into a gap that no tool in our existing stack was built to address.

That gap is what led us to develop AI visibility with Origin.

The three phases of AI adoption

I think about AI adoption in three phases, and I've experienced all three in the span of the last year.

Adopt

A year ago, my biggest problem as a leader was convincing people to use these tools in the first place. Claude Code had just launched, and ChatGPT had been around for a couple of years but hadn’t yet fully penetrated the way that we work. My job as CEO essentially became: "Please, just use AI."

During this phase, zero percent of my thinking was about monitoring. I wasn’t concerned about cost. I wasn’t thinking about risk. Getting adoption was my entire mandate.

De-constrain

Phase two is where I believe most organizations are right now: de-constrain. Once everyone, or close enough, is using the tools, the mandate for me and executives like me flips. Our job becomes making AI as accessible as possible and getting out of the way. We write an effectively open-ended check, encouraging our teams to use as much AI as they want to maximize their productivity and, in turn, that of the business.

The CTO of Uber recently disclosed that their organization had blown through their entire AI budget by April. That's not a mistake. That's removing constraints.

Rationalize

Where I am now, and where I think most organizations are heading faster than they expect, is rationalization. Which model for which work? Where is the spend actually going? Is what we're getting out proportionate to what we're putting in? This is the phase that exposes everything you didn't build in phase two, and the thing you most conspicuously didn't build is any way to see what your AI is actually doing.

You’re not buying software, you’re buying digital labor

Phase three tends to arrive as a budget conversation. The spend has grown large enough that you start to ask what you're actually getting for it. The question sounds like a cost problem, but it isn't. It's a management question, and the answer requires a fundamental reframe of what you think your AI spend actually is.

Most organizations would put it under software. It shows up as a vendor invoice, it renews on a schedule, and it often gets routed to the same budget line as any other SaaS contract. But when you buy tokens, you aren't licensing a feature; you're buying the capacity or intelligence to do work.

As a business leader, think about how you would approach a large headcount increase. You would ask for a plan:

What roles are we going to hire?

What work are they going to do?

What does success look like for these roles in six months?

You would agree on all of that before a single person gets hired. The process exists because labor is a consequential allocation decision, and those decisions deserve deliberate thought. AI credits deserve the same type of deliberate consideration.

Consider the simple fact that the models we use aren't uniformly priced. Running a frontier model versus a lighter one on the same task can differ by an order of magnitude in cost. For most of the year, I didn't manage that distinction at all. But now people on our team hit their daily intelligence limits and lose hours of productive work. The problem isn't total spend; it's that the spend isn't being directed. We have the equivalent of a payroll budget with no plan for it and no understanding of how it’s being used, and we're wondering why the work isn't getting done.
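To make the pricing gap concrete, here is a rough back-of-the-envelope sketch. Every number in it is an assumption chosen for illustration: the per-token prices are made up (not any vendor's real rates), and the task size and task volume are hypothetical. Only the team size of 35 comes from our own situation.

```python
# Hypothetical prices in USD per million tokens; NOT real vendor pricing.
PRICE_PER_MILLION_TOKENS = {"frontier": 15.00, "light": 1.50}


def task_cost(model: str, tokens: int) -> float:
    """Cost in USD of running one task of `tokens` tokens on `model`."""
    return PRICE_PER_MILLION_TOKENS[model] * tokens / 1_000_000


def monthly_cost(model: str, tokens_per_task: int, tasks_per_day: int,
                 people: int, workdays: int = 21) -> float:
    """Cost in USD if every person runs every task on the same model."""
    return task_cost(model, tokens_per_task) * tasks_per_day * people * workdays


# Assumed workload: 20,000 tokens per task, 40 tasks per person per day.
frontier = monthly_cost("frontier", 20_000, 40, people=35)
light = monthly_cost("light", 20_000, 40, people=35)

print(f"frontier: ${frontier:,.2f} per month")
print(f"light:    ${light:,.2f} per month")
print(f"ratio:    {frontier / light:.1f}x")
```

The absolute figures matter less than the ratio: with an undirected default, the same work can cost roughly ten times more under these assumed prices, which is the difference between hitting a usage limit mid-afternoon and not hitting it at all.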

This is a problem we experience with 35 people. How does it compound with 1,000? 10,000? The more people, the larger the agentic workforce, and the greater the challenge of knowing what it's doing.

How I observe our AI usage with Origin

This became the primary reason we built Origin, and it's the main way I understand what my team is doing with AI.

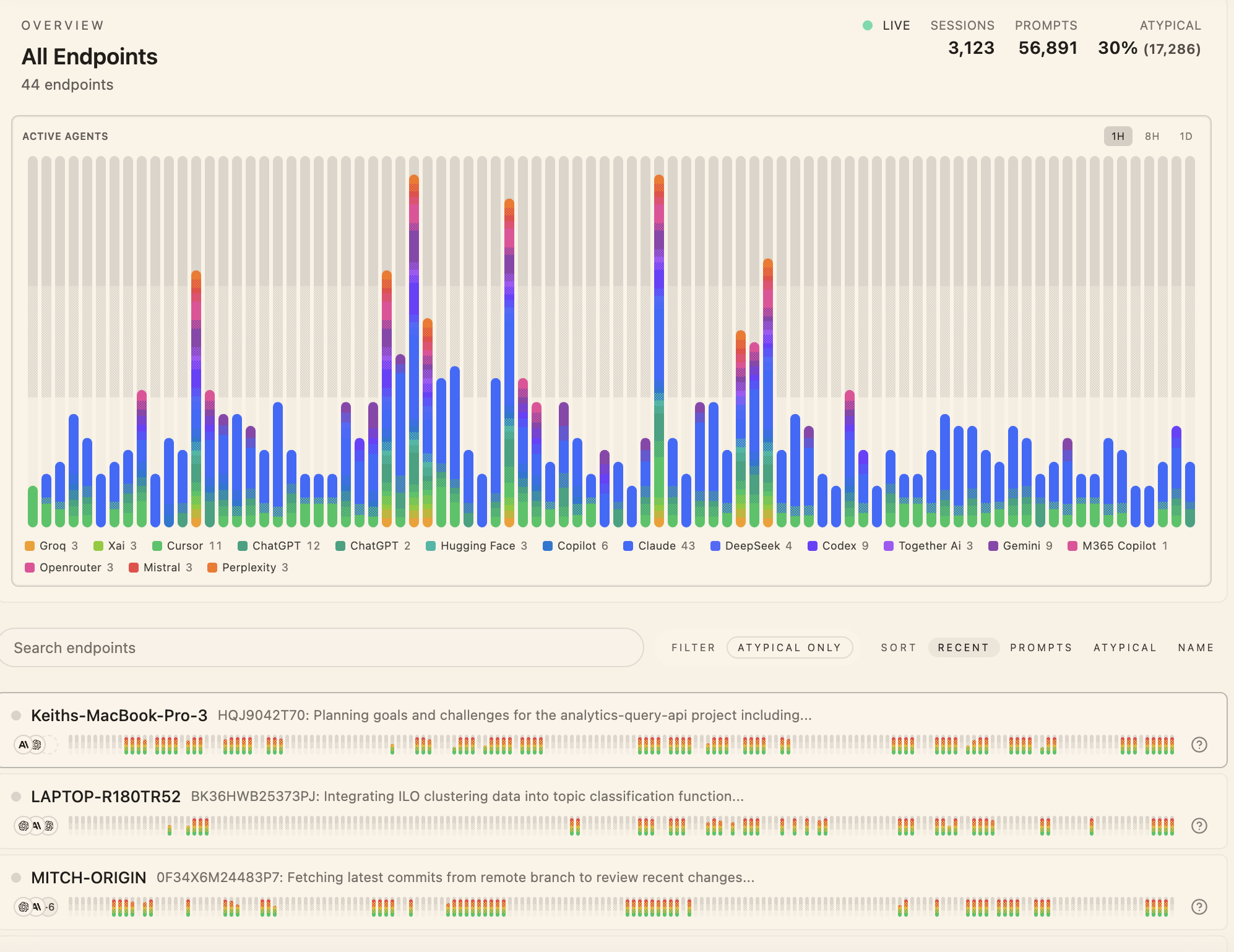

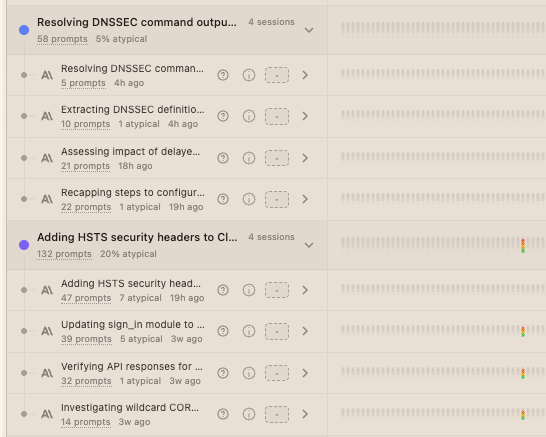

At the most basic level, I can see every agent running across every device: which tools people are using, what models they're running them on, and how that usage is distributed across the team. Below that, I can see the full activity attribution for any given endpoint: what commands were executed, which files were accessed, what network calls were made, and which agents were responsible for each. At a minimum, this gives me certainty that the enterprise contracts I'm signing are actually being used by the team.

But above the raw activity is the ability to see how our team is actually spending this intelligence. Origin clusters the interactions by topic and project, so I can see that a meaningful portion of our AI usage is concentrated in particular projects, or that someone is spending a disproportionate share of our AI capacity on work that isn't being prioritized. What I previously had to ask about, I can now see instantly.
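The project-level view boils down to a simple rollup. Here is a minimal sketch of that idea, assuming usage records that have already been tagged with a project. The record fields, project names, and token counts are all hypothetical, and this does not reflect Origin's actual data model or how it clusters interactions.

```python
from collections import defaultdict


def share_by_project(records: list[dict]) -> dict[str, float]:
    """Return each project's share of total tokens, largest share first."""
    totals: dict[str, int] = defaultdict(int)
    for record in records:
        totals[record["project"]] += record["tokens"]

    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}

    ranked = sorted(totals.items(), key=lambda item: item[1], reverse=True)
    return {project: tokens / grand_total for project, tokens in ranked}


# Hypothetical sample data; the names and numbers are invented.
records = [
    {"person": "a", "project": "billing-rewrite", "tokens": 600_000},
    {"person": "b", "project": "billing-rewrite", "tokens": 300_000},
    {"person": "c", "project": "side-experiment", "tokens": 500_000},
    {"person": "a", "project": "roadmap-feature", "tokens": 100_000},
]

for project, share in share_by_project(records).items():
    print(f"{project}: {share:.0%} of tokens")
```

Comparing a ranking like this against the priorities the team actually agreed on is what turns raw usage data into a management conversation.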

What this gives me is operational intelligence over a part of my business that was previously invisible: the ability to manage my AI workforce with the same intentionality I apply to any other consequential resource at our startup. Not because I distrust my team, but because I am responsible for the organization, and you cannot be responsible for something that you cannot fully understand.