Your Agent's Hidden Supply Chain

Protecting Against Man-in-the-Environment Attacks

The recent wave of cascading software supply chain attacks shows that we are woefully unprepared to address the implications of an untrustworthy software ecosystem. Attackers will always be interested in compromising comparatively brittle targets whose software projects are used as dependencies in some of the most important products and development workflows in the world.

The complexity associated with securing traditional software supply chains has exploded with the advent of computer use agents (CUAs) and other LLM-assisted software. Employees in almost every corporate environment are using CUA tools to complete their work, but there is very little certainty for IT and security teams attempting to understand whether these agents are departing from intended use (whether malicious or incidental).

Tackling this problem involves wrangling with a new problem set: evaluating the risk associated with a dynamic set of "dependencies" that vary between systems and can be defined both as code and as natural language. Our current approaches to endpoint defense and auditing are not designed to monitor software that will behave entirely differently on two different systems in the same environment.

Your System Has Its Own Unique Agentic Supply Chain

One of the most attractive features of CUA clients is their dynamic and highly customizable nature. Users can configure them to behave differently in a given project, install or implement additional capabilities (e.g. skills, plugins, MCP servers), and draw on basically any accessible data source on the internet at any given time. But this customization requires agents to repeatedly poll their environment to determine how to reason through a user's prompts, what actions are available, and how to carry out those actions.

Each time you start a new chat session with an LLM agent, you are establishing a unique execution context for that agent defined by data stored on your system. The agent is responsible for enumerating available environmental input data, both scattered across the local system and retrieved from remote sources, to create an initial configuration state and context window. As the session progresses, the agent repeatedly updates this context window based on user prompts, historical chat transcripts, and explicitly structured memory caches.
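As a rough sketch of that enumeration step from a defender's point of view, the Python below records which candidate input files exist on a system and fingerprints their content, so that two snapshots can be compared. The candidate list is an assumption supplied by the caller (only ~/.claude.json is named in this article), and `snapshot_inputs` and `changed_inputs` are hypothetical helper names, not part of any agent or EDR product.

```python
import hashlib
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class InputArtifact:
    """Fingerprint of one environmental input file at a point in time."""
    path: str
    size: int
    sha256: str


def snapshot_inputs(candidates: list[Path]) -> dict[str, InputArtifact]:
    """Record size and SHA-256 for every candidate file that exists."""
    snapshot: dict[str, InputArtifact] = {}
    for path in candidates:
        if not path.is_file():
            continue  # absent inputs are expected; every system differs
        data = path.read_bytes()
        digest = hashlib.sha256(data).hexdigest()
        snapshot[str(path)] = InputArtifact(str(path), len(data), digest)
    return snapshot


def changed_inputs(
    before: dict[str, InputArtifact], after: dict[str, InputArtifact]
) -> list[str]:
    """Paths that were added, removed, or modified between two snapshots."""
    paths = set(before) | set(after)
    return sorted(p for p in paths if before.get(p) != after.get(p))


# Example call (the candidate list is illustrative, not exhaustive):
#   snapshot = snapshot_inputs([Path.home() / ".claude.json"])
```

A snapshot like this only says that an input changed. It says nothing about how the agent will interpret the new content, which is the harder problem discussed in the rest of this piece.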

A cursory review of current flagship computer use agents shows that file-based configuration is the norm. The vast majority of LLM agent configuration files are .json, .jsonl, .toml, .md, and plain text files, but some agents have begun using more traditional data stores like SQLite for some data. Only one LLM agent, ChatGPT Desktop on macOS, protects this data on disk in any form. I have outlined the locations of some of the more notable environmental input and cache files on disk and their respective purposes below:

~/.claude.json

Environmental Input Is Deceptively Complex

Similar to the software libraries, plugins, and tooling that act as the jumping off point for traditional supply chain compromises, your local file system acts as a supply chain ecosystem for LLM agents running locally. However, there are a few reasons why we have to think differently about agentic supply chains.

- Payload Diversity

While we can enumerate all of the possible types of environmental input data, we can’t predict which of those will be defined on a given system or what content they may include. Each system offers its own collection of environmental input data to agents, creating drastic drift between systems in the same environment. The key departure from traditional software is that this environmental input acts as the equivalent of compiled library files like DLLs, but it is not compiled code and does not provide the kind of predictability that the PE file format does for binary analysis.

- Prompt-Data Isomorphism

Environmental input data, often defined in natural language rather than in code, is interpreted and “upcast” into instructions by the LLM’s reasoning engine at time of ingestion. This strips away our ability to evaluate the outcome of a given set of environmental input data in the same way we can do for traditional software dependencies. Traditional software is deterministic and consistently observable, while agent behavior is often only constrained (or unconstrained) by the interpretation of natural language.

- Stochastic Recursion

Agents often issue additional prompts under the hood to make decisions about prompt intent, relevant cached memory, available tools, and other core capabilities. For example, when Claude Code wants to use already created memory files to handle a user's prompt, the agent will issue the following prompt to determine which files might be "relevant":

System:

You are selecting memories that will be useful to Claude Code as it processes a user's query...

Return a list of filenames ... (up to 5)...

User:

Query: <current user message text>

Available memories:

- [project] ci_failures.md (...): Common flaky tests and mitigations

- [user] style.md (...): Prefer no nested bullets

...

Recently used tools: Bash, Edit

Traditional software is understandable at time of install. While its internal branching logic can certainly be complicated and frustrate reverse engineering attempts, it is always identifiable and reducible to a series of predictable conditional paths (with the right analysis). The introduction of natural language interpretation in the middle of these code paths means that the same code path will diverge based on an agent's fleeting execution context. In other words, we can make correct predictions about agent behavior on one system that are not applicable to another system in the same environment.

We have a portability problem.

Compounding Complexity Over Time

Even if we are able to enumerate all of the environmental input data that might be ingested for a given session and make accurate predictions about how it might be used based on the content, we still would not be able to account for the logical loop where past outputs are used as future inputs. The above stochastic recursion example shows how there is a fuzzy, semantic attempt to identify anything “relevant” from prior conversational turns. If that prior conversation history includes content that would allow more permissive actions by the agent in the future, it may effectively override other safeguards that the user has attempted to put in place.

You may be asking: “if the vast majority of software already uses the file system and registry to save configuration data that is equally subject to modification by attackers, why do CUAs present a novel risk?”

This reflexive thought is understandable, but misses the fact that this input is expressed, ingested, and used much differently than the kinds of user-editable input data that we see for traditional software. Put simply, once we move past the available types of environmental input data we might expect on a system, we don’t have any stable ground to begin making decisions about risk mitigation, safety, or security. Specifically, we can’t adequately analyze the impact of natural language content at scale in the same way we can do for non-agentic software because we cannot emulate the dynamically composed context window in a way that is generally applicable beyond a single system.

Even if we could carry out generally applicable emulation at scale, the agent’s state will shift in response to user prompts, prior model decisions about tool use and reasoning, and various internal stochastic code paths. This means that safety testing would also have to emulate a representative set of possible conversational trajectories across topic types, language choices, etc. This is obviously infeasible given the pace of changes made to agent harnesses and the sheer number of different implementations on the market that would need to be independently evaluated.

Man-in-the-Environment (MitE) Attacks

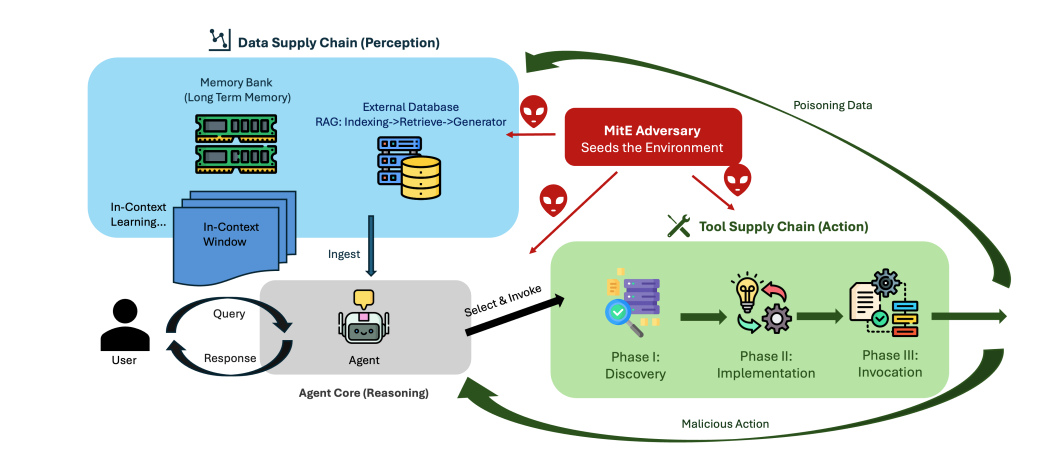

I am far from the only person thinking of agentic safety and security in this way. In A Taxonomy of Attack Vectors and Defense Strategies for Agentic Supply Chain Runtime, Jiang et al. characterize environmental input data as an “agentic supply chain”, split between a “data supply chain” and a “tool supply chain”.

Figure from Jiang et al., A Taxonomy of Attack Vectors and Defense Strategies for Agentic Supply Chain Runtime.

Their description of the current landscape is important because it rightly presents the state of the end user’s system as a key part of the inherent character and behavior of LLM-assisted software. The specific software libraries that developers choose to include in their software define the nature of the final release. Similarly, the end user’s system becomes more than just a source of basic configuration data or specifically defined user content. It defines the extent of what the software can and cannot do in general. I cannot add a new library into already compiled traditional software (outside of application-specific plugins and extensions), but the capabilities of CUA tools can often be extended with a few lines of natural language text appended to an existing environmental input file on disk.

Jiang et al. go on to describe “Man-in-the-Environment (MitE)” attacks as those that seek to “act as a runtime supplier” for agents by “polluting” their environment with data that will cause them to take otherwise unintended (or disallowed) actions. MitE attacks seek to modify artifacts taken as environmental input by CUA applications to corrupt the agent’s ability to reason and plan as intended (the “data” supply chain) and/or change how it takes actions relative to user input (the “tool” supply chain).

The paper’s recommendations for action are focused on how agents can better discern and adapt to potentially malicious environmental input. But these prescriptions are targeted towards developers building agentic software, leaving end users to deal with maintaining a safe local environment for agents on their own.

Common Scenarios

Defenders tasked with monitoring and securing an environment with computer use agents installed must contend with several plausible MitE attack chains.

- An attacker already has access to a system with a computer use agent installed and wishes to launder their actions through the agent to evade detection.

- A corporate user installs a skill, plugin, MCP server, or other third-party resource that carries out actions that the user does not intend to perform (i.e. malware).

- A corporate user's agent performs a web search and ingests malicious content that causes it to take a specific action of an attacker's choosing.

- An attacker uses one of several remote access features built into CUA clients that allow users to send prompts to an agent running on a specific target system.

The throughline between these scenarios is that each of them is mediated by writing, renaming, moving, and deleting files on the system where the agent is running. The above list is far from comprehensive and current academic literature has an endless number of ways to sub-divide and conceptualize the nuances of these generic hypotheticals. For brevity's sake, I am going to discuss everything in the context of the first scenario given the conformity of file interactions between them.

Threat Modelling Pre-Existing Access

Imagine that you are an attacker who has already gained access to a target system and established persistence without a security operations team detecting your presence. Your objective is to gain access to a specific database in the environment to steal and exfiltrate sensitive data. After performing some cursory enumeration of installed software, you realize that the user has Claude Code installed and uses it frequently to build internal software applications for the business.

With this setup in mind, you have decided to add a new skill that enumerates network accessible systems, attempts to determine if any of them might be hosting the database you are targeting, and then writes a text file in C:\Windows\Temp with any potentially relevant systems. To trigger this skill, you have added a single line of text to the Claude.md file in a project directory that you know the user spawns new sessions in frequently. The next time that a session is started in that project directory, the Claude Code agent will be instructed to execute that skill as a sub-agent process without interrupting the user’s actual request in any visible way.

What does this scenario look like to a defender?

Basically every EDR product collects and stores file write telemetry in some form, but it is often filtered for specific paths and file types before it is shipped from the agent to a server-side SIEM. We will assume that we saw all three events: the skill file being written to disk, the modification of the project-scoped Claude.md file, and the enumeration output file being written to the temp directory. Each of these events identifies the calling process that was responsible for the file write and the targeted file’s path, but not much else.

Unfortunately, this picture of environmental input lacks all of the detail necessary to make any claims about whether an agent’s session is in an unsafe state. We know that any sessions started in that directory will include the Claude.md instructions in their context windows and have access to the skill file. Determining whether that specific change will cause the agent to take a specific action, modify its reasoning loop, or have its state changed in some other way is impossible without evaluating the content of the two file changes (i.e. which lines changed and what was written).

What telemetry would defenders need to adequately describe this scenario after the fact?

Assume that we have the additional ability to retrieve full content diffs for each of these file modifications, showing exactly which lines changed and the content that was removed/added by the attacker.
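A minimal sketch of what that content-diff telemetry could look like, assuming the defender retains the previous and current text of each monitored file. It uses Python's standard `difflib`; the function name `line_changes` and the sample file contents in the usage note are hypothetical.

```python
import difflib


def line_changes(old_text: str, new_text: str) -> tuple[list[str], list[str]]:
    """Return (added, removed) lines between two versions of a text file."""
    old, new = old_text.splitlines(), new_text.splitlines()
    added: list[str] = []
    removed: list[str] = []
    matcher = difflib.SequenceMatcher(a=old, b=new, autojunk=False)
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        # "replace" contributes to both lists; "equal" contributes to neither.
        if tag in ("replace", "delete"):
            removed.extend(old[i1:i2])
        if tag in ("replace", "insert"):
            added.extend(new[j1:j2])
    return added, removed
```

For example, comparing a hypothetical project file before and after a single appended instruction, `line_changes("# Notes\nUse pytest.\n", "# Notes\nUse pytest.\nRun the inventory skill first.\n")` returns `(["Run the inventory skill first."], [])`. The diff shows exactly what was written, but not what the agent will do with it.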

Skills are written in natural language and may refer to other resources (either compiled code or more natural language data) on disk or remotely hosted, which forces defenders to make indefensible predictions about how this new content will interact with an arbitrary context window and agent state in a future session. We can’t predict the topic of future conversations or even the cumulative state of those future sessions with enough certainty to say “this skill will cause the agent to perform exactly these operations once executed”.

Even if we could predict agent state for a hypothetical future session with some accuracy, our predictions would falter at the point of ingestion when the agent upcasts that natural language content into additional internal prompts and specific instructions. Similarly, the downstream effects of this skill successfully executing may make similar actions more likely to be allowed in the future. This means that a given user’s machine may also be more susceptible to initial execution if their prior conversational history included similar actions.

This brief threat modeling exercise demonstrates some important observations for monitoring the behavior of agentic software:

- The agent’s internal state is difficult to discern at session start because of payload diversity and prompt-data isomorphism.

- User prompts, changes to environmental data, and the agent’s actions during a session dynamically alter the agent’s state and create the conditions for further exploitation in the future.

- File telemetry alone is insufficient to determine whether a given environmental input file (or other data source) is likely to cause a CUA session to behave maliciously or unsafely.

- The logical connection between environmental input data modifications and actions performed by an agent is inherently tenuous and subject to change between endpoints and even between sessions on the same endpoint over time.

Monitoring the Whole Picture

Jiang et al.’s framing of agentic supply chains is helpful insofar as it clarifies the types of actions an attacker can take to induce a CUA tool to carry out malicious actions on their behalf. However, it is clear that monitoring file interactions in isolation is a necessary but insufficient solution to the problem of Man-in-the-Environment attacks.

Streams of file events tell us what kinds of environmental input exist on the system, but leave the agent’s internal state and reasoning completely opaque to defenders. Tying file modifications to specific agent actions requires us to collect additional data that shows how the agent’s internal state and reasoning shift over time.

Single points of inspection cannot describe an agent whose state is assembled, turn by turn, from the local system's data and tool supply chains. Taken in isolation, prompt data, system interactions, or telemetry generated by the agent itself cannot adequately explain why an agent took a specific action on the system or whether that action was atypical.

The biggest reason that these approaches fail is the non-portability of their claims about risk relative to a given system’s available environmental input data. If (i) the agent's effective execution context is composed at runtime from system-specific environmental artifacts, and (ii) natural-language content in those artifacts is interpreted as instructions through a probabilistic process, then any evaluation pinned to a specific composition is by definition local to that composition and cannot generalize.

The only plausible way forward is to model the connections between user intent, agent intent, and actual system interaction throughout the course of a session and to treat the file system (and any other reachable resources) as a supply chain that must be audited at all times.

The system interactions that traditional detection rules look for must be tied to application-layer telemetry that describes the agent's behavior in its own terms, and that observation has to be tied to the current context of the chat session along with any environmental input data informing the agent's state at that time.

This presents a huge challenge to security vendors because it requires a detection strategy that attempts to identify an anomalous session based on some combination of file events, tool calls, prompt data, and a rolling calculation of the agent’s effective execution context.

To further complicate these requirements, claims about the typicality of a session must be scoped to a specific user, agent type, and prior session history because they are not portable across these dimensions. Two agents on the same system may have entirely different internal processes for establishing their execution contexts. Two different systems have fundamentally different collections of available environmental input that could cause two sessions in the same repo on two different endpoints to result in completely different sets of benign system interactions.
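To make the idea of joining these streams more concrete, here is a minimal sketch that merges file events, prompts, and tool calls into one time-ordered timeline and, for each tool call, lists recent writes by the same user to tracked input files. The `Event` shape, the helper names, and the time-window heuristic are all assumptions for illustration; no EDR or agent product is known to emit telemetry in this form.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    timestamp: float  # seconds since some shared epoch
    user: str
    kind: str         # "file_write", "prompt", or "tool_call"
    detail: str       # file path, prompt text, or tool name


def merge_streams(*streams: list[Event]) -> list[Event]:
    """Merge independent telemetry streams into one time-ordered timeline."""
    return sorted((e for s in streams for e in s), key=lambda e: e.timestamp)


def preceding_input_writes(
    timeline: list[Event], input_paths: set[str], window: float
) -> list[tuple[str, list[str]]]:
    """Pair each tool call with tracked input files written shortly before it.

    Time proximity is a weak heuristic; it suggests where to look, not
    that a write caused the tool call.
    """
    writes: list[Event] = []
    pairs: list[tuple[str, list[str]]] = []
    for event in timeline:
        if event.kind == "file_write" and event.detail in input_paths:
            writes.append(event)
        elif event.kind == "tool_call":
            related = [
                w.detail
                for w in writes
                if w.user == event.user
                and event.timestamp - w.timestamp <= window
            ]
            pairs.append((event.detail, related))
    return pairs
```

Scoping the join by user reflects the non-portability point above: the same tool call after the same file write may be routine for one user and unusual for another.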

We cannot say whether a given environmental input will lead a CUA session into an unsafe state, but we can ask whether the joint behavior of a session looks unusual relative to a baseline of this user's prior sessions. The baseline is necessarily fuzzy, because legitimate agent use is itself fuzzy. Probabilistic context composition produces drift in the joint distribution even during normal, benign usage by end users. Approaches to insider threat detection like cluster-based deviation scoring with idempotence to behaviorally redundant actions and cross-group density diffing across populations of sessions both lend themselves well to detecting Man-in-the-Environment attacks. Some form of these approaches is likely our best bet at combatting the inherent uncertainty in agentic supply chains and their effect on agent behavior.
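As a deliberately simplified illustration of scoring a session against a per-user baseline, the sketch below represents each session as a count of tool calls and measures the cosine distance between a new session and the centroid of that user's prior sessions. This is a plain centroid-distance score that I am substituting for illustration; it is not the cluster-based deviation scoring or density diffing mentioned above. The function names are hypothetical, and `WebFetch` in the usage note is an assumed tool name.

```python
import math
from collections import Counter


def normalize(counts: Counter) -> dict[str, float]:
    """Convert raw tool-call counts into relative frequencies."""
    total = sum(counts.values())
    return {k: v / total for k, v in counts.items()} if total else {}


def centroid(sessions: list[Counter]) -> dict[str, float]:
    """Average the normalized tool-use profiles of prior sessions."""
    center: dict[str, float] = {}
    for session in sessions:
        for tool, freq in normalize(session).items():
            center[tool] = center.get(tool, 0.0) + freq / len(sessions)
    return center


def cosine_distance(a: dict[str, float], b: dict[str, float]) -> float:
    """1 - cosine similarity; 1.0 if either profile is empty."""
    dot = sum(v * b.get(k, 0.0) for k, v in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0.0 or norm_b == 0.0:
        return 1.0
    return 1.0 - dot / (norm_a * norm_b)


def deviation_score(baseline: list[Counter], session: Counter) -> float:
    """Higher means the session looks less like this user's prior sessions."""
    return cosine_distance(centroid(baseline), normalize(session))
```

With a baseline of `[Counter(Edit=8, Bash=2), Counter(Edit=6, Bash=4)]`, a session of `Counter(Edit=7, Bash=3)` scores close to zero, while `Counter(Bash=9, WebFetch=1)` scores well above it. Any threshold on this score would have to be tuned per user and per agent type, for the non-portability reasons already discussed.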

We have struggled to deal with traditional supply chain attacks where malicious content is available for inspection at build time. Mitigation of these attacks involves the inspection of static content that conforms to file formats, loader patterns, and other inviolable requirements, where organizations seeking to install that software can carry out their due diligence prior to organization-wide installation. Put simply, you have the ability to identify malicious software at point of delivery.

In the world of agentic software, the release build you receive from a vendor for Claude Code or some other CUA tool is basically just there to coordinate and assemble an effective execution context relative to a specific system’s environmental input data and a user’s prompts. If it is going to behave in unsafe ways, those will only be clear once a user starts a session and begins interacting with the agent. This requires us to both shift our focus and grapple with the insufficiency of current continuous monitoring approaches. Our current corpuses of static rules and some black-box ML modules focused on traditional security concerns are unlikely to scale with the non-portability of agent behavior.